Genesys Quality Assurance in 2026: From Manual Sampling to AI-Driven Coaching

Most Genesys contact centers already understand the core limitation of manual QA.

You cannot review enough interactions fast enough to manage quality at the speed of the operation.

A sampled QA program can still catch obvious failures. But it struggles to give leaders broad coverage, fast coaching loops, and confidence that emerging issues are visible before they affect customer outcomes.

That is why Genesys quality assurance is shifting toward AI-assisted review models.

The Problem with Manual QA in a Genesys Environment

Genesys Cloud CX gives teams a unified environment for omnichannel interaction handling and workforce engagement.

The challenge is not interaction capture. The challenge is review capacity.

In many Genesys operations, QA still looks like this:

- Review a small sample of calls or chats

- Complete scorecards after the interaction

- Aggregate results weekly or monthly

- Deliver coaching after a long delay

That creates predictable gaps:

- Most interactions are never reviewed

- Coaching arrives too late

- Compliance and experience risk surface slowly

- Trend analysis is based on samples instead of broad evidence

For a high-volume Genesys team, this is less a people problem than an operating model problem.

What AI-Driven QA Changes

AI QA for Genesys changes the shape of the workflow.

Instead of using human reviewers as the first-pass scoring layer, the system evaluates interactions automatically and routes the highest-value cases to analysts, coaches, or supervisors.
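The routing step can be sketched in a few lines. This is a minimal Python illustration, assuming each interaction has already been pre-scored by an automated model. The `ScoredInteraction` record, its fields, and the priority weights are hypothetical and do not correspond to a Genesys Cloud or vendor API. The sketch ranks a batch so that low scores, possible compliance issues, and low-confidence scores reach human reviewers first, then cuts the list at reviewer capacity.

```python
from dataclasses import dataclass


# Hypothetical pre-scored interaction; field names are illustrative only,
# not part of any Genesys Cloud or vendor API.
@dataclass
class ScoredInteraction:
    interaction_id: str
    qa_score: float          # 0-100, from the automated first pass
    compliance_flag: bool    # True if the model detected a possible compliance issue
    model_confidence: float  # 0-1, how confident the model is in its own score


def review_priority(item: ScoredInteraction) -> float:
    """Higher value means more worth a human reviewer's time (assumed weights)."""
    priority = 100.0 - item.qa_score                  # low scores rise to the top
    if item.compliance_flag:
        priority += 50.0                              # possible compliance risk jumps the queue
    priority += (1.0 - item.model_confidence) * 25.0  # uncertain scores get a second look
    return priority


def route_for_review(items: list[ScoredInteraction], capacity: int) -> list[str]:
    """Return the IDs of the interactions humans should review, up to capacity."""
    ranked = sorted(items, key=review_priority, reverse=True)
    return [item.interaction_id for item in ranked[:capacity]]


batch = [
    ScoredInteraction("call-1", 92.0, False, 0.95),
    ScoredInteraction("call-2", 58.0, False, 0.90),
    ScoredInteraction("chat-3", 85.0, True, 0.80),
    ScoredInteraction("call-4", 74.0, False, 0.40),
]
print(route_for_review(batch, capacity=2))  # ['chat-3', 'call-2']
```

Interactions below the cut are not discarded in this sketch: they keep their automated score for trend reporting, and only human attention is rationed.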

This is the logic behind AI QA for Genesys, AutoQA for Genesys, and automated QA for Genesys.

The practical gains are straightforward:

- More interactions evaluated

- Faster review cycles

- Consistent pre-scoring across teams

- Quicker detection of quality and compliance risk

- Better prioritization for human reviewers

The important point is not replacing human judgment. It is relocating human judgment to the moments where it matters most.

Why Coaching Improves When QA Becomes Operational

Manual QA usually produces delayed coaching.

AI-driven QA makes coaching more operational because leaders can identify patterns earlier:

- Which agents struggle with specific contact reasons

- Which teams mishandle compliance language

- Which queues show falling empathy or resolution quality

- Which workflow changes correlate with repeat contacts or escalations

Once you can see those patterns quickly, coaching stops being an end-of-month report and becomes a management input.
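Surfacing those patterns is mostly an aggregation problem. A minimal sketch, assuming the automated pass produces one (agent, contact reason, QA score) row per interaction; the sample rows, the 70-point threshold, and the minimum-volume rule are hypothetical choices, not a product feature. It groups scores by agent and contact reason and returns the groups that average below the threshold, worst first.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical rows from an automated QA pass: (agent, contact_reason, qa_score).
evaluations = [
    ("agent_a", "billing", 88), ("agent_a", "billing", 91),
    ("agent_a", "cancellation", 62), ("agent_a", "cancellation", 58),
    ("agent_b", "billing", 70), ("agent_b", "billing", 66),
    ("agent_b", "cancellation", 84),
]


def coaching_gaps(rows, threshold=70.0, min_volume=2):
    """Return (agent, reason, average) groups scoring below threshold, worst first.

    min_volume keeps a single bad interaction from being reported as a pattern.
    """
    grouped = defaultdict(list)
    for agent, reason, score in rows:
        grouped[(agent, reason)].append(score)

    gaps = []
    for (agent, reason), scores in grouped.items():
        average = fmean(scores)
        if len(scores) >= min_volume and average < threshold:
            gaps.append((agent, reason, round(average, 1)))
    return sorted(gaps, key=lambda gap: gap[2])


print(coaching_gaps(evaluations))
# [('agent_a', 'cancellation', 60.0), ('agent_b', 'billing', 68.0)]
```

Here agent_a looks strong on billing but weak on cancellations, which is the contact-reason-specific gap a single blended average would hide. Swapping the grouping key to team or queue gives the other views listed above.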

That is a major reason high-intent search terms like AutoQA for Genesys, Genesys QA software, and Genesys AI quality assurance matter. Buyers are not only searching for score automation. They are searching for faster intervention.

The Link Between QA, Sentiment, and VoC

Genesys customers often start by looking for better QA. Then they discover the program works better when quality data is connected to customer signal.

Genesys positions its Speech and Text Analytics capability as AI-driven conversational analytics for voice and text that helps organizations understand sentiment and meaning at scale.

That matters because a low-quality interaction is rarely just a score problem.

It can also be:

- A customer feedback problem

- A repeat-contact problem

- A churn-risk problem

- A workflow problem

- A self-service or AI-agent handoff problem

This is why the stronger architecture for Genesys teams is often QA + VoC, not QA in isolation.
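One way to see why QA in isolation can mislead is to cross each interaction's QA result with a customer signal. A minimal sketch, assuming a 0-100 QA score, a sentiment value between -1 and 1 from an analytics layer, and a repeat-contact flag; the record, thresholds, and category names are hypothetical. The bucket worth watching is the one where the interaction passed QA but the customer signal is still negative, which a score-only program would never surface.

```python
from dataclasses import dataclass


# Hypothetical joined record: QA result plus customer-signal fields.
@dataclass
class Interaction:
    interaction_id: str
    qa_score: float          # 0-100 from the QA scorecard
    sentiment: float         # -1.0 (negative) to 1.0 (positive), assumed scale
    repeat_contact_7d: bool  # customer contacted again within 7 days


def classify(item: Interaction, qa_pass: float = 75.0, negative: float = -0.3) -> str:
    """Cross the QA outcome with the customer outcome (thresholds are assumptions)."""
    qa_ok = item.qa_score >= qa_pass
    customer_ok = item.sentiment > negative and not item.repeat_contact_7d
    if qa_ok and customer_ok:
        return "healthy"
    if qa_ok:
        return "blind_spot"       # passed QA, but the customer signal disagrees
    if customer_ok:
        return "scorecard_issue"  # behavior gap without visible customer impact
    return "priority_case"        # both the score and the customer signal are bad


for row in [
    Interaction("c1", 90.0, 0.6, False),
    Interaction("c2", 88.0, -0.7, True),
    Interaction("c3", 60.0, 0.2, False),
    Interaction("c4", 55.0, -0.5, True),
]:
    print(row.interaction_id, classify(row))
```

A cluster of `blind_spot` cases in one queue may point at a workflow, self-service, or handoff problem rather than an agent problem.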

What to Measure in a Modern Genesys QA Program

If you are redesigning quality assurance for Genesys Cloud CX, the scoring model should usually include more than process adherence alone.

A more effective model often includes:

- Compliance and policy adherence

- Resolution quality

- Empathy and communication quality

- Hold, transfer, and handoff behavior

- Escalation management

- Customer sentiment or friction context

- Contact reason and repeat-contact pattern

This does not mean every criterion should be automated blindly. It means AI should help teams review at scale and route exceptions intelligently.
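A weighted scorecard with hard-fail criteria is one common way to combine these dimensions while still routing exceptions to people. The sketch below is a minimal illustration, assuming each criterion has already been scored 0-100. The criterion keys, weights, and thresholds are hypothetical, and contact reason is left out because it works better as a grouping dimension than as a scored item. A compliance score below the hard-fail line sends the interaction to a human even when the weighted total looks fine.

```python
# Hypothetical weights for the criteria listed above; they sum to 1.0.
WEIGHTS = {
    "compliance": 0.25,
    "resolution": 0.25,
    "empathy": 0.15,
    "handoff": 0.15,
    "escalation": 0.10,
    "customer_friction": 0.10,
}
# Criteria where a low score always goes to a human, regardless of the total.
HARD_FAIL = {"compliance"}


def evaluate(scores: dict[str, float],
             hard_fail_below: float = 50.0,
             review_below: float = 70.0) -> tuple[float, str]:
    """Return (weighted total, routing decision) for one interaction."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")

    total = sum(weight * scores[name] for name, weight in WEIGHTS.items())
    if any(scores[name] < hard_fail_below for name in HARD_FAIL):
        return total, "human_review:hard_fail"
    if total < review_below:
        return total, "human_review:low_score"
    return total, "auto_accept"


example = {"compliance": 40, "resolution": 95, "empathy": 90,
           "handoff": 90, "escalation": 90, "customer_friction": 90}
total, route = evaluate(example)
print(round(total, 2), route)  # 78.75 human_review:hard_fail
```

Keeping hard-fail rules separate from the weighted total is the design choice that matters: a strong average cannot hide a compliance miss.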

How Oversai Fits on Top of Genesys

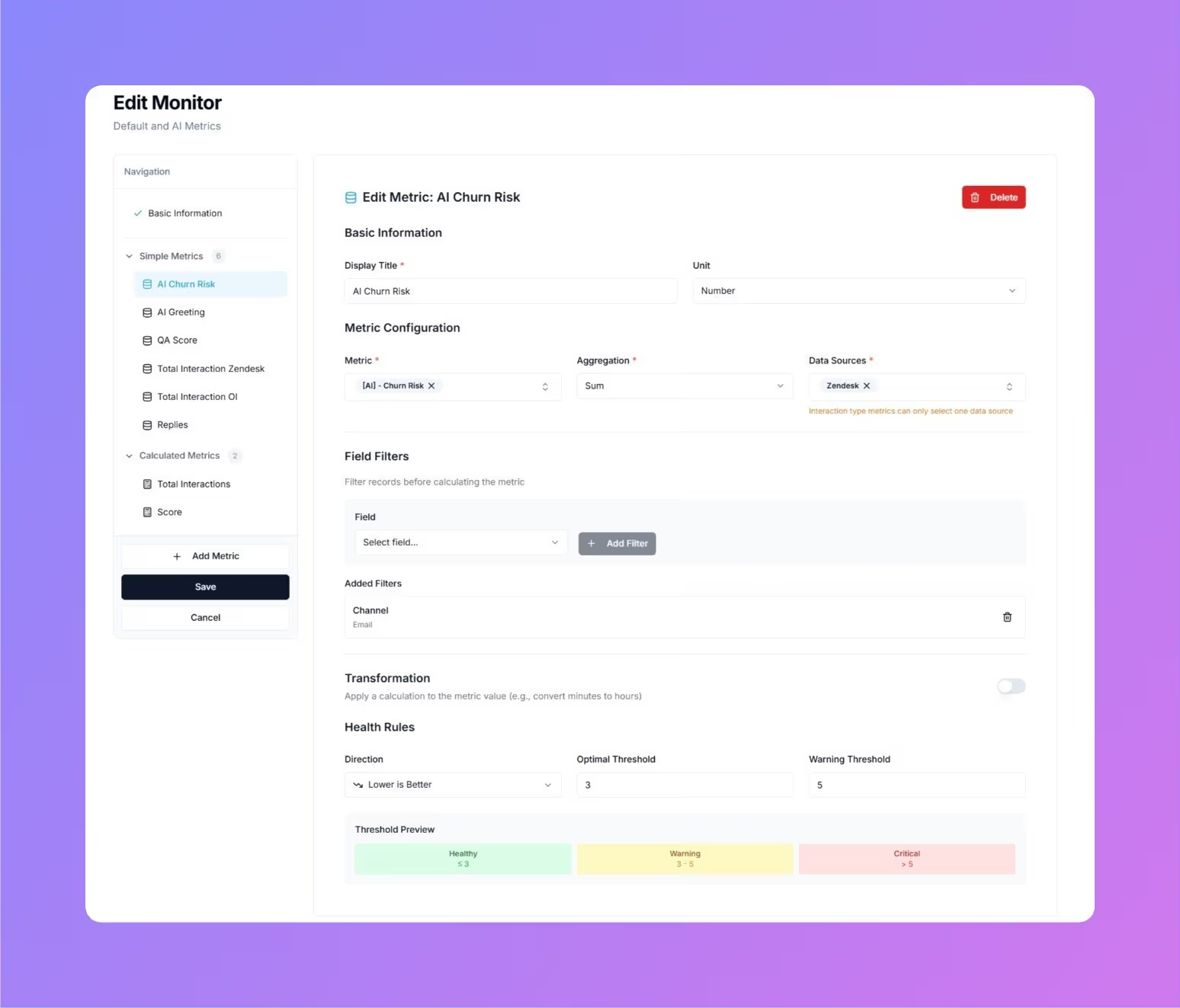

Oversai gives Genesys customers an AI analysis layer designed for quality operations.

Teams can use it to:

- Score Genesys calls and digital conversations against custom QA criteria

- Detect customer sentiment and issue themes on the same interaction

- Flag low-scoring, high-risk, or high-value conversations

- Reduce manual sampling pressure on QA analysts

- Connect coaching to the customer outcome behind the score

This is where Genesys quality assurance delivers the most value: when automated QA scoring is connected to customer feedback analysis.

SEO and Keyword Focus for This Topic

The best supporting keyword cluster for this article includes:

- Genesys quality assurance

- Genesys QA software

- AI QA for Genesys

- AutoQA for Genesys

- automated QA for Genesys

- Genesys Cloud CX QA

- contact center quality assurance software

These are the phrases most aligned with buyers trying to move away from manual call sampling and toward broader AI-driven coverage.

Bottom Line

Genesys contact centers do not need more sampling discipline. They need a review model that matches the scale and speed of the interaction stream.

AI-driven quality assurance helps teams evaluate more conversations, find risk earlier, and connect coaching to measurable customer outcomes. When that QA layer is also linked to sentiment and VoC signals, leaders gain a clearer picture of what is actually happening inside the operation.

For Genesys customers, that is the shift that matters in 2026: from delayed scorecards to continuous quality insight.

Explore Genesys quality assurance, AI QA for Genesys, and AutoQA for Genesys.