AutoQA Scorecard Criteria: What CX Teams Should Measure in 2026

An AutoQA scorecard is only as good as the criteria behind it.

Many teams automate an old manual QA scorecard and expect transformation. That usually produces faster scoring, not a better customer experience. The scorecard still measures greetings, scripts, process steps, and documentation while missing customer sentiment, resolution quality, root cause, compliance risk, and AI-agent behavior.

Modern CX teams need scorecards that measure what actually predicts trust, loyalty, cost, risk, and customer outcomes.

Quick Answer: What Should an AutoQA Scorecard Measure?

An AutoQA scorecard should measure resolution quality, accuracy, policy adherence, empathy, communication clarity, compliance, customer sentiment, escalation handling, documentation quality, root cause, coaching opportunity, and AI-agent risk when automation is involved.

The best scorecards connect QA criteria to Voice of Customer signals. They do not only ask whether the agent followed the process. They ask whether the customer's issue was understood, handled correctly, and resolved clearly, and whether the customer was spared avoidable friction.

Why Traditional QA Scorecards Break in AutoQA

Traditional scorecards were designed for human reviewers sampling a small number of conversations.

That model encouraged criteria that were easy to check manually:

- Did the agent use the greeting?

- Did the agent verify the account?

- Did the agent follow the script?

- Did the agent document the ticket?

- Did the agent close the conversation correctly?

Those questions still matter in some environments. But when a team moves to AutoQA, it can evaluate every conversation and detect richer signals.

AutoQA can answer:

- Was the customer issue actually resolved?

- Did sentiment improve or decline?

- Was the information accurate?

- Was the customer asked to repeat information unnecessarily?

- Did the agent create avoidable repeat contact?

- Did the conversation expose a policy, product, or process defect?

- Did an AI agent hallucinate, overpromise, or fail to escalate?

That is why AutoQA should not simply replicate manual QA. It should upgrade the quality model.

The 12 AutoQA Scorecard Criteria CX Teams Should Use

Use these criteria as a starting framework. The exact weights should reflect your industry, risk profile, channels, and customer promises.

| Criteria | What it measures | Why it matters |
|---|---|---|
| Issue understanding | Whether the agent understood the customer's need | Prevents wrong answers and repeated explanation |
| Resolution quality | Whether the issue was solved or advanced clearly | Connects QA to customer outcome |
| Accuracy | Whether information was correct and complete | Reduces risk, rework, and broken promises |
| Policy adherence | Whether process and policy were followed | Protects consistency and compliance |
| Empathy | Whether the customer concern was acknowledged appropriately | Builds trust without relying on scripts |
| Communication clarity | Whether the answer was easy to understand | Reduces confusion and repeat contact |
| Sentiment movement | Whether customer emotion improved or declined | Connects QA to customer experience |
| Escalation handling | Whether the agent escalated at the right time | Prevents stalled or mishandled issues |
| Compliance and risk | Whether legal, privacy, billing, or safety rules were followed | Protects the business and customer |
| Documentation quality | Whether internal records are useful | Improves continuity and downstream work |
| Root cause signal | What likely created the contact | Helps product, policy, and operations fix issues |
| Coaching opportunity | What behavior should be reinforced or improved | Turns QA into performance improvement |

Example AutoQA Scorecard Structure

Here is a practical weighting model for customer service and contact center teams.

| Category | Weight | Example criteria |
|---|---|---|
| Customer outcome | 30% | Issue understanding, resolution quality, next-step clarity |
| Quality and accuracy | 25% | Correct answer, policy adherence, documentation |
| Customer experience | 20% | Empathy, communication clarity, sentiment movement |
| Risk and compliance | 15% | Required disclosures, privacy, regulated language, escalation |
| Improvement signal | 10% | Coaching theme, root cause, repeat-contact risk |

The exact percentages can change, but the principle should remain: scorecards should prioritize outcomes over surface behaviors.
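To make the weighting concrete, here is a minimal Python sketch of how the category weights in the table above could roll up into a single score. The category keys, the 0.0 to 1.0 input scale, and the function name `weighted_score` are assumptions for illustration, not part of any specific AutoQA product.

```python
# Category weights taken from the example table; they must sum to 1.0.
# The dictionary keys are hypothetical names chosen for this sketch.
CATEGORY_WEIGHTS = {
    "customer_outcome": 0.30,
    "quality_and_accuracy": 0.25,
    "customer_experience": 0.20,
    "risk_and_compliance": 0.15,
    "improvement_signal": 0.10,
}


def weighted_score(category_scores: dict) -> float:
    """Combine per-category scores (each 0.0 to 1.0) into one 0-100 score."""
    if abs(sum(CATEGORY_WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("category weights must sum to 1.0")
    missing = set(CATEGORY_WEIGHTS) - set(category_scores)
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    total = sum(
        weight * category_scores[name]
        for name, weight in CATEGORY_WEIGHTS.items()
    )
    return round(total * 100, 1)


# A conversation that followed every process step but only half-resolved
# the issue loses 15 points, because customer outcome carries 30%.
example = {
    "customer_outcome": 0.5,
    "quality_and_accuracy": 1.0,
    "customer_experience": 1.0,
    "risk_and_compliance": 1.0,
    "improvement_signal": 1.0,
}
print(weighted_score(example))  # 85.0
```

Changing the weights changes which failures dominate the final number, which is why the weights should be agreed with business owners rather than left at defaults.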

Criteria Definitions That Work for AI Scoring

AutoQA requires criteria that are specific enough for consistent evaluation.

Weak criterion:

Agent showed empathy.

Better criterion:

Agent acknowledged the customer's stated concern or emotion in a way that matched the context and helped move the conversation toward resolution.

Weak criterion:

Agent solved the problem.

Better criterion:

The customer received a correct resolution, a clear next step, or an explicit explanation of why the request could not be completed. The conversation should not leave the customer uncertain about what happens next.

Weak criterion:

Agent followed policy.

Better criterion:

Agent followed the required policy for the customer's issue type, did not contradict approved guidance, and escalated when the policy required supervisor, billing, legal, or technical review.

Clear definitions improve both AI scoring and human calibration.
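One way to keep definitions consistent between AI scoring and human calibration is to store each criterion as structured data and render the same text for both audiences. The sketch below assumes a hypothetical `Criterion` structure and `render_rubric` helper; the field names are illustrative, and the definition text is the "better criterion" for resolution from above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    key: str              # hypothetical identifier used in reports
    definition: str       # what the scorer should evaluate
    fail_examples: tuple  # concrete situations that should fail


RESOLUTION_QUALITY = Criterion(
    key="resolution_quality",
    definition=(
        "The customer received a correct resolution, a clear next step, or an "
        "explicit explanation of why the request could not be completed."
    ),
    fail_examples=(
        "The conversation ends with the customer uncertain about what happens next.",
    ),
)


def render_rubric(criterion: Criterion) -> str:
    """Format one criterion as plain text for a scoring prompt or reviewer guide."""
    lines = [
        f"Criterion: {criterion.key}",
        f"Definition: {criterion.definition}",
        "Fail when:",
    ]
    lines += [f"- {example}" for example in criterion.fail_examples]
    return "\n".join(lines)


print(render_rubric(RESOLUTION_QUALITY))
```

Because the AI scorer and the human reviewer read the same rendered text, a disagreement between them points to an ambiguous definition rather than to two different rubrics.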

Add VoC Signals to the QA Scorecard

QA and Voice of Customer should not live in separate systems.

For each scorecard criterion, ask what customer signal should be connected:

| QA signal | VoC signal to connect |
|---|---|
| Resolution failed | Negative sentiment, repeat contact, churn risk |
| Policy followed but customer frustrated | Policy friction, mismatched customer expectations, product gap |
| Empathy missed | Escalation risk, low trust, complaint language |
| Accurate answer but long handle time | Process complexity, knowledge base gap |
| AI-agent handoff failed | Automation containment issue, customer frustration |

This connection matters because a QA score alone can hide the customer story. A conversation can score well on process and still create a bad experience if the policy is confusing, the product is broken, or the customer leaves without a clear answer.
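A small Python sketch of that idea: flag conversations that scored well on the scorecard but still carry a negative customer signal. The `ScoredConversation` fields, the sentiment scale, and the 85-point threshold are all assumptions for illustration, and the sample data is made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredConversation:
    conversation_id: str
    qa_score: float          # 0-100 scorecard result
    sentiment_change: float  # end sentiment minus start, assumed -1.0 to 1.0
    repeat_contact: bool     # customer came back about the same issue


def hidden_friction(conversations, min_qa_score=85.0):
    """Return IDs of conversations that scored well but show a negative VoC signal."""
    return [
        c.conversation_id
        for c in conversations
        if c.qa_score >= min_qa_score
        and (c.sentiment_change < 0 or c.repeat_contact)
    ]


sample = [
    ScoredConversation("c-1", 92.0, 0.4, False),   # good score, good experience
    ScoredConversation("c-2", 90.0, -0.3, False),  # good score, sentiment declined
    ScoredConversation("c-3", 60.0, -0.5, True),   # low score already flags this one
]
print(hidden_friction(sample))  # ['c-2']
```

Conversations surfaced this way are candidates for policy or product review rather than agent coaching, since the agent followed the process.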

AutoQA Criteria for AI Agents

Human agents and AI agents should share some quality standards, but AI agents need additional criteria.

Add these when evaluating bots, copilots, or LLM agents:

- Grounding: Did the AI answer using approved information?

- Hallucination risk: Did it invent facts, policies, prices, timelines, or capabilities?

- Refusal quality: Did it refuse unsafe or unsupported requests correctly?

- Handoff quality: Did it escalate at the right time with useful context?

- Brand safety: Did the response match the company's tone and standards?

- Prompt drift: Did behavior change after a prompt, model, or knowledge update?

- Containment quality: Did automation resolve the issue without trapping the customer?
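As one illustration of the prompt drift criterion above, the sketch below compares the pass rate on a criterion before and after a prompt, model, or knowledge update. The function names and the 5-point tolerance are assumptions; a production check would also account for sample size and normal variation.

```python
def pass_rate(results):
    """Share of conversations that passed a criterion (results are booleans)."""
    if not results:
        raise ValueError("need at least one result")
    return sum(results) / len(results)


def has_drifted(before, after, max_drop=0.05):
    """True when the pass rate fell by more than max_drop after a change."""
    return pass_rate(before) - pass_rate(after) > max_drop


# Made-up grounding results: 9 of 10 passed before the update, 7 of 10 after.
before_update = [True] * 9 + [False]
after_update = [True] * 7 + [False] * 3
print(has_drifted(before_update, after_update))  # True
```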

Read CX Observability for AI Agents for a deeper monitoring framework.

Common AutoQA Scorecard Mistakes

Avoid these patterns when designing an AutoQA scorecard.

Too many criteria

If every conversation produces 40 scores, managers will not know what to act on. Keep the top-level scorecard focused and use subcriteria for diagnosis.

Script worship

Script adherence can matter, but it should not outweigh resolution, accuracy, and customer outcome.

No evidence requirement

Every score should include the evidence that caused it. This is essential for trust, coaching, disputes, and calibration.
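One way to enforce an evidence requirement is to make it impossible to record a score without a supporting quote. This is a minimal sketch; the `CriterionScore` structure and its field names are hypothetical, and the transcript quote is invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CriterionScore:
    criterion: str
    passed: bool
    evidence: tuple  # verbatim transcript quotes that justify the score

    def __post_init__(self):
        # Reject scores that arrive with no usable quote.
        if not any(quote.strip() for quote in self.evidence):
            raise ValueError(f"score for '{self.criterion}' has no evidence")


score = CriterionScore(
    criterion="accuracy",
    passed=False,
    evidence=("Your refund will arrive within two days.",),
)
print(score.evidence[0])
```

Rejecting the record at creation time means every score that reaches a dashboard or a coaching session can be traced back to what was actually said.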

No channel differences

Voice, chat, email, tickets, WhatsApp, and AI-agent conversations have different patterns. A unified scorecard can work, but the criteria need channel-aware interpretation.

No calibration loop

AutoQA is not "set and forget." Teams need recurring calibration between AI scores, human reviewers, supervisors, and business owners.
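A calibration session needs a number to track over time. A common choice is Cohen's kappa, which measures agreement between two raters beyond what chance would produce. The sketch below computes it for pass/fail decisions on one criterion; the function name and sample labels are illustrative, and returning 1.0 when both raters always give the same single label is a convention chosen for this sketch.

```python
def cohens_kappa(ai_labels, human_labels):
    """Cohen's kappa for two lists of pass/fail (boolean) decisions."""
    if len(ai_labels) != len(human_labels) or not ai_labels:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(ai_labels)
    # Share of conversations where AI and human gave the same decision.
    observed = sum(a == h for a, h in zip(ai_labels, human_labels)) / n
    # Agreement expected by chance, from each rater's own pass rate.
    ai_pass = sum(ai_labels) / n
    human_pass = sum(human_labels) / n
    expected = ai_pass * human_pass + (1 - ai_pass) * (1 - human_pass)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


ai = [True, True, False, False]
human = [True, True, False, True]
print(cohens_kappa(ai, human))  # 0.5
```

Raw agreement here is 75%, but kappa is 0.5 because half of that agreement would be expected by chance. Tracking kappa per criterion shows which definitions need rewriting.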

No ownership for root cause

If QA finds that a product defect, policy, billing rule, or automation path creates bad experiences, someone outside QA may own the fix. Scorecards should make that visible.

How to Roll Out a Better AutoQA Scorecard

Use a phased approach.

- Audit your current manual QA scorecard.

- Remove criteria that do not change customer outcomes.

- Rewrite ambiguous criteria with evidence-based definitions.

- Add VoC signals: sentiment, topic, contact reason, root cause, repeat-contact risk.

- Add AI-agent criteria if automation handles customers.

- Run calibration on a sample of real interactions.

- Compare AI scoring with human reviewer decisions.

- Adjust weights and definitions before using scores for coaching.

- Build dashboards by team, channel, topic, and customer outcome.

- Route severe findings into coaching, compliance, product, and operations workflows.
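The last step, routing severe findings, can start as a simple lookup from finding type to owning team. The finding types, team names, 1 to 5 severity scale, and the `qa_review` fallback below are all assumptions for illustration.

```python
from typing import Optional

# Hypothetical mapping from finding type to the team that owns the fix.
OWNERS = {
    "agent_behavior": "coaching",
    "compliance_breach": "compliance",
    "product_defect": "product",
    "policy_friction": "operations",
}


def route_finding(finding_type: str, severity: int, min_severity: int = 3) -> Optional[str]:
    """Return the owning workflow for a severe finding, or None if below threshold."""
    if severity < min_severity:
        return None  # not severe enough to route; stays in regular reporting
    # Unknown finding types fall back to QA for manual triage.
    return OWNERS.get(finding_type, "qa_review")


print(route_finding("product_defect", severity=4))  # product
print(route_finding("product_defect", severity=1))  # None
```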

For a broader operating model, read How CX Observability Improves AutoQA Programs.

Where Oversai Fits

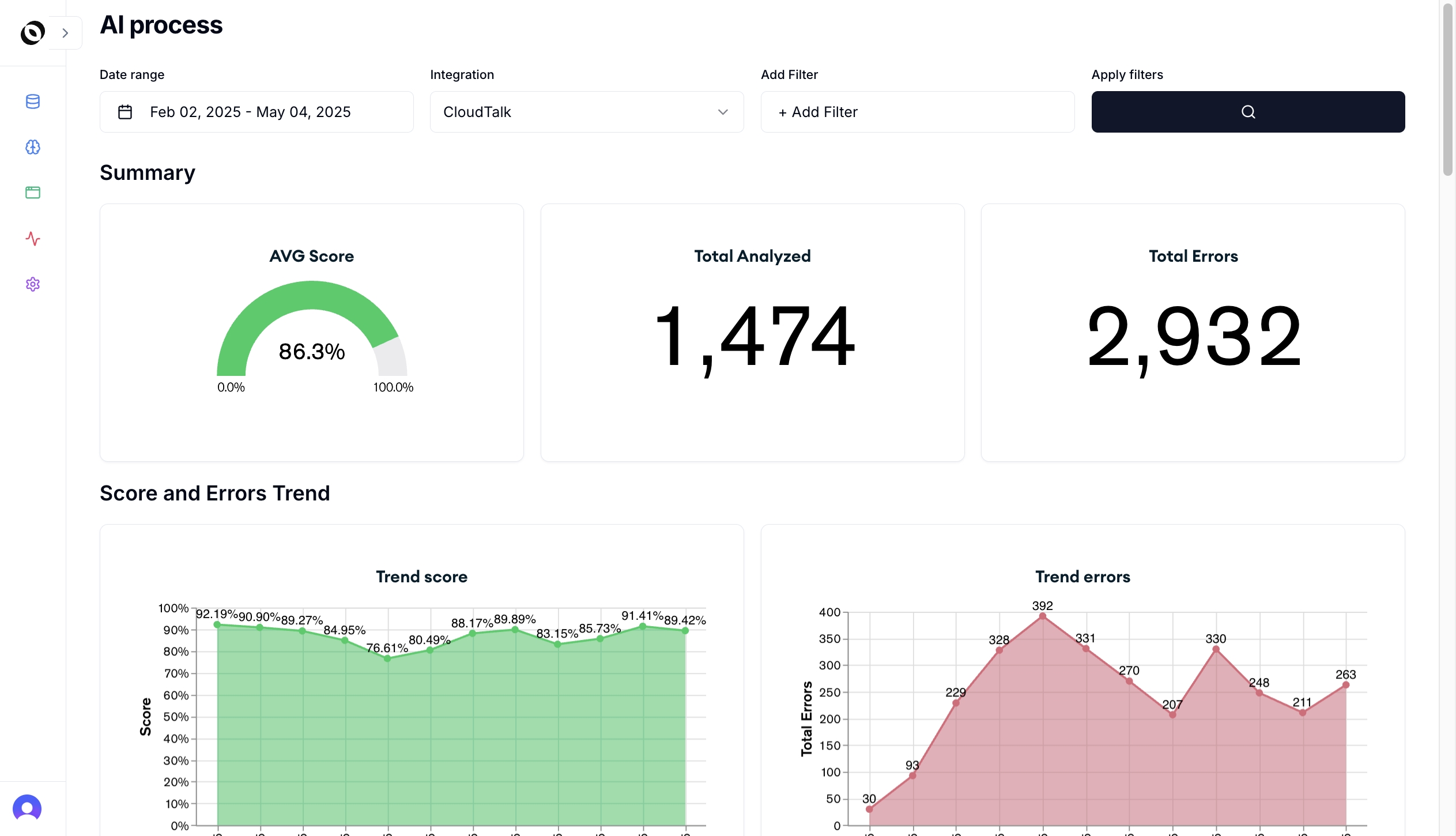

Oversai helps CX teams turn AutoQA scorecards into continuous interaction intelligence.

With Oversai, teams can score 100% of conversations, connect scores to sentiment and topics, monitor human and AI agents, surface coaching evidence, and use CX observability to identify the root causes behind quality failures.

That means the scorecard is not just a measurement artifact. It becomes a way to answer:

- Which quality issues are increasing?

- Which topics produce the lowest customer sentiment?

- Which agents or AI workflows need review?

- Which policies create avoidable contacts?

- Which scorecard criteria predict escalation, churn, or repeat contact?

- Which product or operations teams need evidence from customer conversations?

Frequently Asked Questions

What is an AutoQA scorecard?

An AutoQA scorecard is a structured set of quality criteria used by AI to evaluate customer interactions automatically. It can measure resolution, accuracy, empathy, compliance, sentiment, documentation, and customer outcomes.

What criteria should be in a customer service QA scorecard?

A customer service QA scorecard should include issue understanding, resolution quality, accuracy, policy adherence, empathy, communication clarity, escalation handling, compliance, documentation, sentiment, root cause signal, and coaching opportunity.

How many criteria should an AutoQA scorecard have?

Most teams should keep the main AutoQA scorecard between 8 and 15 criteria. More detailed subcriteria can exist behind the scenes, but managers need a scorecard they can interpret and act on.

Should AutoQA scorecards include sentiment?

Yes. Sentiment helps teams understand the customer experience behind the QA score. It is especially useful when a conversation follows the process but still leaves the customer frustrated.

Can the same scorecard evaluate human and AI agents?

Some criteria can be shared, such as accuracy, resolution, escalation, and customer outcome. AI agents also need specialized criteria for grounding, hallucination risk, handoff quality, prompt drift, and brand safety.

How does Oversai help with AutoQA scorecards?

Oversai scores customer interactions against configurable QA criteria and connects those scores to VoC, sentiment, topic classification, coaching evidence, AI-agent monitoring, and CX observability workflows.

If your AutoQA scorecard still looks like a manual checklist, it is probably under-measuring the customer experience. Talk to Oversai to design a scorecard that connects quality, sentiment, and operational action.